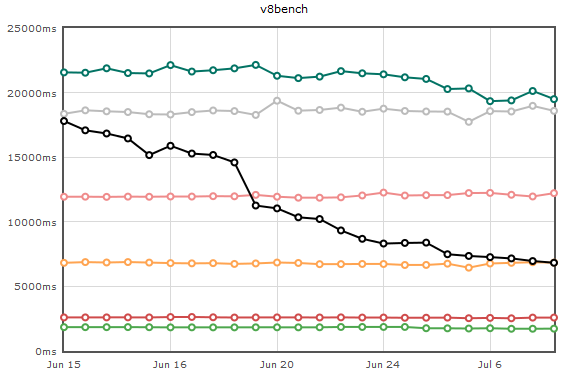

On July 12th, JägerMonkey officially crossed TraceMonkey on the v8 suite of benchmarks. Yay! It’s not by a lot, but this gap will continue to widen, and it’s an exciting milestone.

A lot’s happened over the past two months. You’ll have to excuse our blogging silence – we actually sprinted and rewrote JägerMonkey from scratch. Sounds crazy, huh? The progress has been great:

The black line is the new method JIT, and the orange line is the tracing JIT. The original iteration of JägerMonkey (not pictured) was slightly faster than the pink line. We’ve recovered our original performance and more in significantly less time.

What Happened…

In early May, Dave Mandelin blogged about our half-way point. Around the same time, Luke Wagner finished the brunt of a massive overhaul of our value representation. The new scheme, “fat values”, uses a 64-bit encoding on all platforms.
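To make the idea of a uniform 64-bit value concrete, here is a minimal sketch in Python. The post does not describe the actual bit layout, so everything below is an assumption for illustration: the tag constants, the helper names, and the choice to hide non-double values in otherwise unused NaN bit patterns are all hypothetical. The point it shows is that a double fits in the word directly, while an integer or boolean is a 32-bit tag plus a 32-bit payload.

```python
import struct

# Hypothetical tags for this sketch only. Upper 32 bits above TAG_BASE are
# negative NaN bit patterns that no canonicalized double uses.
TAG_BASE = 0xFFFF0000
TAG_INT32 = TAG_BASE | 1
TAG_BOOLEAN = TAG_BASE | 2
CANONICAL_NAN = 0x7FF8000000000000


def box_double(d):
    """Store a double as its raw IEEE-754 bits, canonicalizing NaN."""
    if d != d:
        return CANONICAL_NAN
    return struct.unpack("<Q", struct.pack("<d", d))[0]


def box_int32(i):
    """Store a 32-bit integer as tag (high word) plus payload (low word)."""
    return (TAG_INT32 << 32) | (i & 0xFFFFFFFF)


def box_boolean(b):
    return (TAG_BOOLEAN << 32) | int(bool(b))


def is_double(word):
    """A word is a double unless its high 32 bits hold one of our tags."""
    return (word >> 32) < TAG_BASE


def unbox(word):
    tag = word >> 32
    payload = word & 0xFFFFFFFF
    if tag < TAG_BASE:
        return struct.unpack("<d", struct.pack("<Q", word))[0]
    if tag == TAG_INT32:
        # Reinterpret the low 32 bits as a signed integer.
        return payload - (1 << 32) if payload & 0x80000000 else payload
    if tag == TAG_BOOLEAN:
        return payload == 1
    raise ValueError("unknown tag")
```

In a layout like this, a type check is a single comparison on the high word, and a floating-point number needs no separate allocation.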

We realized that retooling JägerMonkey would be a ton of work. Armed with the knowledge we’d learned, we brought up a whole new compiler over the next few weeks. By June we were ready to start optimizing again. “Prepare to throw one away”, indeed.

JägerMonkey has gotten a ton of new performance improvements and features since the reboot that were not present in the original compiler:

- Local variables can now stay in registers (inside basic blocks).

- Constants and type information propagate much better. We also do primitive type inference.

- References to global variables and closures are now much faster, using more polymorphic inline caches.

- There are many more fast-paths for common use patterns.

- Intern Sean Stangl has made math much faster when floating-point numbers are involved – using the benefits of fat values.

- Intern Andrew Drake has made our JIT’d code work with debuggers.
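For readers unfamiliar with polymorphic inline caches, the sketch below shows the general technique in Python. It is not JägerMonkey's implementation, which works by patching generated machine code; the `Shape`, `Obj`, and `PropertySite` classes and the `MAX_STUBS` limit are hypothetical names invented for this illustration. Each access site remembers which slot a property lives in for the object layouts it has already seen, so repeat visits skip the generic lookup.

```python
class Shape:
    """Hypothetical object layout: maps property names to slot indices."""

    def __init__(self, names):
        self.slots = {name: index for index, name in enumerate(names)}

    def lookup(self, name):
        return self.slots[name]


class Obj:
    def __init__(self, shape, values):
        self.shape = shape
        self.slots = list(values)


class PropertySite:
    """One property-access site with a small polymorphic cache."""

    MAX_STUBS = 4  # assumed limit; beyond this the site stays on the slow path

    def __init__(self, name):
        self.name = name
        self.stubs = []   # (shape, slot) pairs seen at this site
        self.misses = 0   # number of slow-path lookups

    def get(self, obj):
        # Fast path: a cheap identity check per cached shape.
        for shape, slot in self.stubs:
            if obj.shape is shape:
                return obj.slots[slot]
        # Slow path: generic lookup, then remember the result.
        self.misses += 1
        slot = obj.shape.lookup(self.name)
        if len(self.stubs) < self.MAX_STUBS:
            self.stubs.append((obj.shape, slot))
        return obj.slots[slot]
```

A site that alternates between two layouts takes the slow path only twice:

```python
point2d = Shape(["x", "y"])
point3d = Shape(["z", "y", "x"])
site = PropertySite("x")
values = [site.get(Obj(point2d, [1, 2])), site.get(Obj(point3d, [9, 8, 7]))] * 3
```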

What about Tracer Integration?

This is a tough one to answer, and people are really curious! The bad news is we’re pretty curious too – we just don’t know what will happen yet. One thing is sure: if it is not carefully tuned, the tracer will drag down the method JIT’s performance.

The goal of JägerMonkey is to be as fast as or faster than the competition, whether or not tracing is enabled. We have to integrate the two in a way that gives us a competitive edge. We didn’t do this in the first iteration, and it showed on the graphs.

This week I am going to do the simplest possible integration. From there we’ll tune heuristics as we go. Since this tuning can happen at any time, our focus will still be on method JIT performance. Similarly, it will be a while before an integrated line appears on Are We Fast Yet, to avoid distraction from the end goal.

The good news is, the two JITs win on different benchmarks. There will be a good intersection.

What’s Next?

The schedule is tight. Over the next six weeks, we’ll be polishing JägerMonkey in order to land by September 1st. That means the following things need to be done:

- Tinderboxes must be green.

- Everything in the test suite must JIT, sans oft-maligned features like E4X.

- x64 and ARM must have ports.

- All large-scale, invasive perf wins must be in place.

- Integration with the tracing JIT must work, without degrading method JIT performance.

For more information, and who’s assigned to what, see our Path to Firefox 4 page.

Performance Wins Left

We’re generating pretty good machine code at this point, so our remaining performance wins fall into two categories. The first is driving down the inefficiencies in the SpiderMonkey runtime. The second is identifying places we can eliminate use of the runtime, by generating specialized JIT code.

Perhaps the most important is making function calls fast. Right now we’re seeing JM’s function calls being upwards of 10X slower than the competition. Its problems fall into both categories, and it’s a large project that will take multiple people over the next three months. Luke Wagner and Chris Leary are on the case already.

Lots of people on the JS team are now tackling other areas of runtime performance. Chris Leary has ported WebKit’s regular expression compiler. Brian Hackett and Paul Biggar are measuring and tackling what they find – so far lots of object allocation inefficiencies. Jason Orendorff, Andreas Gal, Gregor Wagner, and Blake Kaplan are working on Compartments (GC performance). Brendan is giving us awesome new changes to object layouts. Intern Alan Pierce is finding and fixing string inefficiencies.

During this home stretch, the JM folks are going to try to blog about progress and milestones much more frequently.

Are We Fast Yet Improvements

Sort of old news, but Michael Clackler got us fancy new hovering perf deltas on arewefastyet.com. wx24 gave us the XHTML-compliant layout that looks way better (though I’ve probably ruined compliance by now).

We’ve also got a makeshift page for individual test breakdowns now. It’s nice to see that JM is beating everyone on at least *one* benchmark (nsieve-bits).

Summit Slides

They’re here. Special thanks to Dave Mandelin for coaching me through this.

Conclusion

Phew! We’ve made a ton of progress, and a ton more is coming in the pipeline. I hope you’ll stay tuned.
